Published On Oct 17, 2020

#ai #research #attention

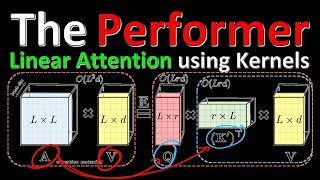

Transformers, having already captured NLP, have recently started to take over the field of Computer Vision. So far, the size of images as input has been challenging, as the memory requirements of the Transformer's attention mechanism grow quadratically with input size. LambdaNetworks offer a way around this requirement and capture long-range interactions without the need to build expensive attention maps. They reach a new state of the art on ImageNet and compare favorably to both Transformers and CNNs in terms of efficiency.
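To get a feel for the quadratic growth mentioned above, here is a rough back-of-the-envelope sketch. It assumes one float32 attention map per image, with every pixel attending to every other pixel, and ignores batch size, heads, and everything else in the network. The key depth of 16 and value depth of 64 used for the lambda are illustrative values picked for this example, not numbers taken from the paper, and the position-based part of a lambda layer would add further cost on top.

```python
def attention_map_bytes(n_positions, bytes_per_value=4):
    """Size of one dense n x n attention map (float32 by default)."""
    return n_positions * n_positions * bytes_per_value


def content_lambda_bytes(key_depth, value_depth, bytes_per_value=4):
    """Size of one k x v content lambda; it does not depend on n."""
    return key_depth * value_depth * bytes_per_value


# Hypothetical depths, chosen only for illustration.
KEY_DEPTH, VALUE_DEPTH = 16, 64

for side in (32, 64, 128, 224):
    n = side * side  # one position per pixel
    attn_mb = attention_map_bytes(n) / 1e6
    lam_kb = content_lambda_bytes(KEY_DEPTH, VALUE_DEPTH) / 1e3
    print(f"{side}x{side} image: attention map {attn_mb:,.1f} MB, "
          f"content lambda {lam_kb:.1f} KB")
```

Doubling the side length of the image quadruples the number of positions and multiplies the attention map size by sixteen, while the size of the content lambda stays fixed.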

OUTLINE:

0:00 - Introduction & Overview

6:25 - Attention Mechanism Memory Requirements

9:30 - Lambda Layers vs Attention Layers

17:10 - How Lambda Layers Work

31:50 - Attention Re-Appears in Lambda Layers

40:20 - Positional Encodings

51:30 - Extensions and Experimental Comparisons

58:00 - Code

Paper: https://openreview.net/forum?id=xTJEN...

Lucidrains' Code: https://github.com/lucidrains/lambda-...

Abstract:

We present a general framework for capturing long-range interactions between an input and structured contextual information (e.g. a pixel surrounded by other pixels). Our method, called the lambda layer, captures such interactions by transforming available contexts into linear functions, termed lambdas, and applying these linear functions to each input separately. Lambda layers are versatile and may be implemented to model content and position-based interactions in global, local or masked contexts. As they bypass the need for expensive attention maps, lambda layers can routinely be applied to inputs of length in the thousands, enabling their applications to long sequences or high-resolution images. The resulting neural network architectures, LambdaNetworks, are computationally efficient and simple to implement using direct calls to operations available in modern neural network libraries. Experiments on ImageNet classification and COCO object detection and instance segmentation demonstrate that LambdaNetworks significantly outperform their convolutional and attentional counterparts while being more computationally efficient. Finally, we introduce LambdaResNets, a family of LambdaNetworks, that considerably improve the speed-accuracy tradeoff of image classification models. LambdaResNets reach state-of-the-art accuracies on ImageNet while being ∼4.5x faster than the popular EfficientNets on modern machine learning accelerators.
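The core idea in the abstract (turn the context into a linear function, then apply it to each input) can be sketched in a few lines of NumPy. This is a minimal illustration of the content-only part, not the authors' implementation: it leaves out position lambdas, multiple queries per position, batching, and normalization layers. The function name `content_lambda_layer`, the weight names, and all dimensions are made up for this example.

```python
import numpy as np


def softmax(x, axis):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def content_lambda_layer(x, context, w_q, w_k, w_v):
    """Content-only lambda layer sketch.

    x:       (n, d) inputs, one row per position
    context: (m, d) context elements
    returns: (n, v) outputs
    """
    queries = x @ w_q                      # (n, k)
    keys = softmax(context @ w_k, axis=0)  # (m, k), normalized over context positions
    values = context @ w_v                 # (m, v)
    lam = keys.T @ values                  # (k, v): the context summarized as a linear map
    return queries @ lam                   # apply the same lambda to every query


rng = np.random.default_rng(0)
n, m, d, k, v = 6, 10, 8, 4, 5             # illustrative sizes only
x = rng.normal(size=(n, d))
context = rng.normal(size=(m, d))
w_q = rng.normal(size=(d, k))
w_k = rng.normal(size=(d, k))
w_v = rng.normal(size=(d, v))

y = content_lambda_layer(x, context, w_q, w_k, w_v)
print(y.shape)  # (6, 5)
```

Note that no (n, m) matrix is ever built: the context is first collapsed into a small (k, v) matrix, and only then combined with the queries. Mathematically the result equals `(queries @ keys.T) @ values`, which is where attention "re-appears", but computing it in that order would materialize the expensive map.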

Authors: Anonymous

Links:

YouTube: / yannickilcher

Twitter: / ykilcher

Discord: / discord

BitChute: https://www.bitchute.com/channel/yann...

Minds: https://www.minds.com/ykilcher

Parler: https://parler.com/profile/YannicKilcher

LinkedIn: / yannic-kilcher-488534136

If you want to support me, the best thing to do is to share out the content :)

If you want to support me financially (completely optional and voluntary, but a lot of people have asked for this):

SubscribeStar: https://www.subscribestar.com/yannick...

Patreon: / yannickilcher

Bitcoin (BTC): bc1q49lsw3q325tr58ygf8sudx2dqfguclvngvy2cq

Ethereum (ETH): 0x7ad3513E3B8f66799f507Aa7874b1B0eBC7F85e2

Litecoin (LTC): LQW2TRyKYetVC8WjFkhpPhtpbDM4Vw7r9m

Monero (XMR): 4ACL8AGrEo5hAir8A9CeVrW8pEauWvnp1WnSDZxW7tziCDLhZAGsgzhRQABDnFy8yuM9fWJDviJPHKRjV4FWt19CJZN9D4n